Imagine a new customer asks an AI like ChatGPT about your business. Instead of saying you are an expert, the AI tells them you closed down years ago. Or maybe it says you sell products you’ve never even heard of. In the world of tech, we call this an AI Brand Hallucination. For a business owner, it’s a silent disaster.

- Definition of AI Hallucination: A phenomenon where a Large Language Model (LLM) generates output that is fluent and confident but factually false or unsupported by the data behind it.

- The Cause for Businesses: Incongruent digital footprints—such as conflicting addresses, outdated LinkedIn profiles, or fragmented service descriptions—force AI to “fill in the gaps” with predicted (but incorrect) data.

- The Discovery Shift: By 2026, over 40% of consumers have shifted from traditional search lists to AI-synthesized “Snapshots” (SGE), making AI accuracy the primary driver of digital reputation.

- The “Grounding” Solution: To prevent hallucinations, a brand must provide “Grounding Data”—verifiable, structured information (Schema.org) that acts as a Single Source of Truth for AI crawlers.

- Impact on Conversion: AI-generated falsities (e.g., claiming a business is closed) create a “Trust Gap” that leads to immediate bounces and lost revenue for small and medium-sized enterprises (SMEs) and food and beverage (F&B) owners.

- The Role of Digital Village: Architecting a “Congruent Ecosystem” that eliminates digital noise, ensuring AI engines cite verified facts rather than generated hallucinations.

Why AI Makes Things Up

Large Language Models (LLMs) like ChatGPT, Gemini, and Claude don’t “think” in the human sense; they are like very smart parrots that predict the next most likely piece of text based on the data they were trained on or can retrieve. As explained by Google Cloud’s research on the nature of AI, a hallucination occurs when an AI generates a response that is coherent but factually incorrect. When that response is about your business, the result is an AI brand hallucination.

If your digital footprint is a chaotic mess of old addresses or conflicting service descriptions, the AI gets “confused.” Instead of admitting it doesn’t know, it “hallucinates” a story by stitching together fragments of outdated data. This isn’t just a technical glitch; it’s a strategic risk. Writing for the Harvard Business Review, experts emphasize that managing your reputation in the age of generative AI requires proactive control over the data these machines consume.

The Real Cost of a “Messy” Brand

We’ve seen F&B brands lose bookings because AI cited an old menu from a defunct third-party site. We’ve seen consultants lose leads because an AI bot claimed they were “inactive” simply because their blog hadn’t been updated in a year.

This happens because the AI lacks what researchers call “grounding.” According to the Stanford Institute for Human-Centered AI (HAI), LLMs often generate falsities because they lack access to a reliable, real-time “Single Source of Truth.” If you haven’t architected your digital ecosystem to be that source, you are leaving your reputation up to a machine’s best guess.

To win in 2026, you can’t just be online. You must be congruent. Everything must match. If you are experiencing an AI brand hallucination, it can be fixed.
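A congruence check like this can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular tool: the listing sources, field names, and business details below are all hypothetical, and a real audit would pull these values from your actual profiles and directories.

```python
from collections import defaultdict


def normalize(value: str) -> str:
    """Lowercase and replace punctuation with spaces so that trivial
    formatting differences ("12 Main St." vs "12 Main St") are ignored."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in value.lower())
    return " ".join(cleaned.split())


def find_conflicts(listings: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """For each field, group sources by normalized value and return only
    the fields where the sources disagree."""
    seen = defaultdict(lambda: defaultdict(list))
    for source, fields in listings.items():
        for field, value in fields.items():
            seen[field][normalize(value)].append(source)
    return {field: dict(values) for field, values in seen.items() if len(values) > 1}


# Hypothetical listings for a made-up business.
listings = {
    "website": {"name": "Example Cafe", "address": "12 Main St.", "status": "open"},
    "google_business_profile": {"name": "Example Cafe", "address": "12 Main St", "status": "open"},
    "old_directory": {"name": "Example Cafe and Bar", "address": "98 Old Rd", "status": "closed"},
}

conflicts = find_conflicts(listings)
for field, values in conflicts.items():
    print(field, values)
```

In this made-up example, the old directory disagrees with the other two sources on name, address, and status, which is exactly the kind of mismatch that needs to be cleaned up first.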

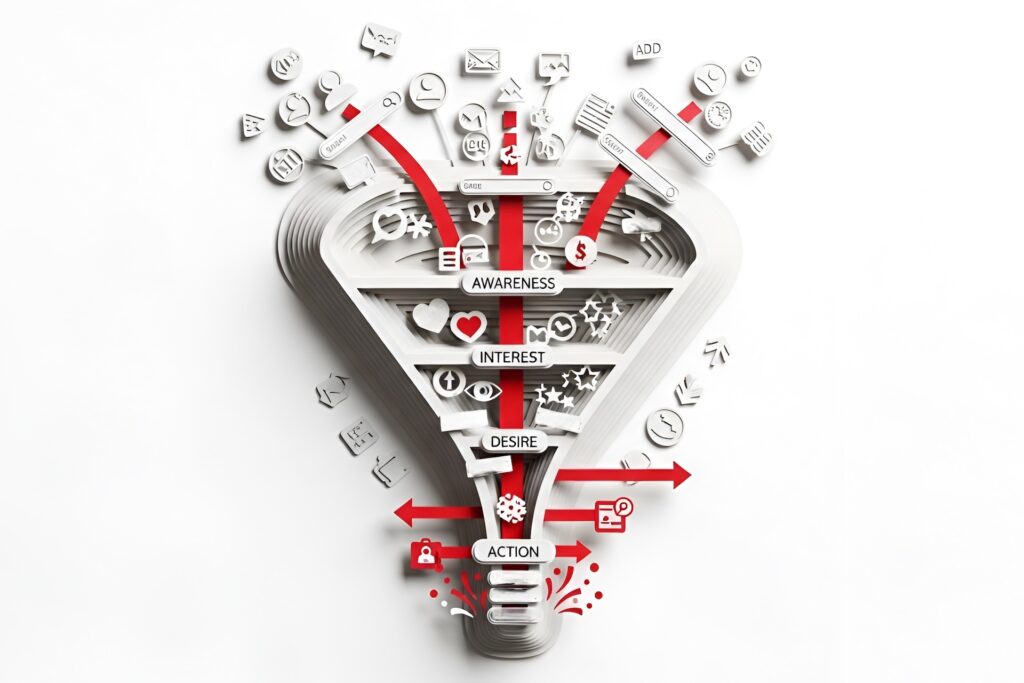

How We Fix Your AI Brand Hallucination

At DigitalVillage, we solve this through a process of Information Distillation. We don’t just “fix a website.” We find and eliminate the conflicting digital echoes of your past and synchronize your pillars. By providing the AI with a congruent “Digital Paper Trail,” we ensure that when the machine speaks about your brand, it tells the truth.

- Cleaning the Past: We find and remove the old, wrong info that confuses the AI.

- Creating the Blueprint: We use structured data markup (Schema.org) to give the AI a machine-readable “cheat sheet” about your business.

- Building the Loop: We make sure your website, social media, and news articles all say the same thing.

When your footprint is clean, the AI stops guessing and starts recommending.
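To make the “cheat sheet” idea concrete, here is a rough sketch of what Schema.org markup can look like, generated with Python's standard `json` module. The business name, URL, phone number, and address are placeholders, and the helper name `to_jsonld_script` is our own for this example. The property names (`name`, `telephone`, `address`, `openingHours`, `sameAs`) come from the Schema.org vocabulary.

```python
import json

# Placeholder facts for a made-up business; replace with verified details.
business = {
    "@context": "https://schema.org",
    "@type": "Restaurant",
    "name": "Example Cafe",
    "url": "https://www.example.com",
    "telephone": "+1-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "12 Main St",
        "addressLocality": "Springfield",
        "addressCountry": "US",
    },
    "openingHours": "Mo-Fr 09:00-17:00",
    # Official profiles that describe the same business.
    "sameAs": ["https://www.linkedin.com/company/example-cafe"],
}


def to_jsonld_script(data: dict) -> str:
    """Wrap the data in the script tag used to embed JSON-LD in a web page."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = to_jsonld_script(business)
print(snippet)
```

The printed snippet would go in the page's HTML. The point is that every fact in it should match what your other profiles say.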

Frequently Asked Questions

1. Why does AI confidently state my business is “permanently closed” when we are still active?

This usually happens due to data noise or a data void. If your Google Business Profile is active but an old directory listing from 2018 says “closed,” and you haven’t published recent “proof of life” (like 2026 press releases or active social updates), the AI might latch onto the old data because it lacks a more recent, high-confidence signal to override it. AI doesn’t “know” the truth; it predicts the most probable answer based on available patterns.

2. How does “Continuous Auditing” differ from a yearly SEO check-up?

A traditional SEO check-up looks at your site once a year to fix broken links or keywords. Continuous Auditing is an ongoing defensive strategy. Because AI models (like Gemini, ChatGPT, and Perplexity) are constantly being updated with new data, your “Entity Confidence” can drop in weeks if your competitors are more active or if a third-party site publishes incorrect info about you. Continuous auditing means monitoring what AI says about you every month to ensure your “Web of Proof” remains the dominant narrative.
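As a rough illustration of what one monthly check could look like, the sketch below scans saved AI answers for red-flag phrases. Everything here is an assumption for the example: the engine labels, the phrase list, and the `audit_answers` helper are hypothetical, and the answers are plain strings you would collect yourself. No real AI service is called.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    engine: str  # which AI engine produced the answer
    phrase: str  # the red-flag phrase that was found


# Hypothetical phrases that would contradict the facts of an active business.
RED_FLAGS = ("permanently closed", "no longer in business", "inactive")


def audit_answers(answers: dict[str, str], red_flags=RED_FLAGS) -> list[Finding]:
    """Return one finding for each red-flag phrase found in each answer."""
    findings = []
    for engine, text in answers.items():
        lowered = text.lower()
        for phrase in red_flags:
            if phrase in lowered:
                findings.append(Finding(engine, phrase))
    return findings


# Made-up answers, as if copied from two different AI engines this month.
answers = {
    "engine_a": "Example Cafe is open weekdays from 9 to 5.",
    "engine_b": "Example Cafe appears to be permanently closed.",
}

findings = audit_answers(answers)
for finding in findings:
    print(f"{finding.engine}: found '{finding.phrase}'")
```

Simple phrase matching like this will miss subtler errors, so treat it as a first alert rather than a full audit.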

3. Can a viral negative review cause an AI hallucination?

It’s a significant risk. If a single negative thread on a platform like Reddit or a high-traffic forum gains more “engagement” than your official website, AI models might weigh that sentiment more heavily. The AI might then describe your brand as “struggling” or “unreliable” based on that skewed data. Continuous auditing allows you to catch these “sentiment shifts” early so you can counter them with authoritative, positive case studies and official statements.